`server.py` provides a simple HTTP API for serving the chatbot. The model can be exported by copying the following files into a folder:

Then run `main.py` after setting the proper model directory. At the end of the execution you can interact with the chatbot. An open-domain chatbot needs the knowledge and
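The HTTP API itself is not documented here, so the following is only a minimal sketch of what serving a chatbot over HTTP can look like, using just the Python standard library. The `/chat` endpoint, the JSON fields `message` and `reply`, and the `generate_reply` and `ask` functions are hypothetical names, not the actual interface of `server.py`. `generate_reply` is an echo stub standing in for the real model call.

```python
import json
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer


def generate_reply(message: str) -> str:
    # Hypothetical stub: replace with the model inference used by main.py / predict.py.
    return "echo: " + message


class ChatHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        if self.path != "/chat":  # hypothetical endpoint name
            self.send_error(404)
            return
        length = int(self.headers.get("Content-Length", 0))
        payload = json.loads(self.rfile.read(length) or b"{}")
        body = json.dumps({"reply": generate_reply(payload.get("message", ""))}).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # silence the default per-request logging


def ask(port: int, message: str) -> str:
    """Send one message to the (hypothetical) /chat endpoint and return the reply."""
    req = urllib.request.Request(
        f"http://127.0.0.1:{port}/chat",
        data=json.dumps({"message": message}).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req) as resp:
        return json.loads(resp.read())["reply"]


if __name__ == "__main__":
    server = HTTPServer(("127.0.0.1", 0), ChatHandler)  # port 0: let the OS pick a free port
    port = server.server_address[1]
    threading.Thread(target=server.serve_forever, daemon=True).start()
    print(ask(port, "Ciao!"))  # echo: Ciao!
    server.shutdown()
    server.server_close()
```

Keeping the model call behind a single function makes it easy to swap the stub for the real predictor without touching the HTTP handling.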

Set the proper `MODEL_DIR` and `CHECKPOINT_NAME` in `predict.py`, then run `main.py`.

## Pretrained model checkpoint

# Meena chatbot download

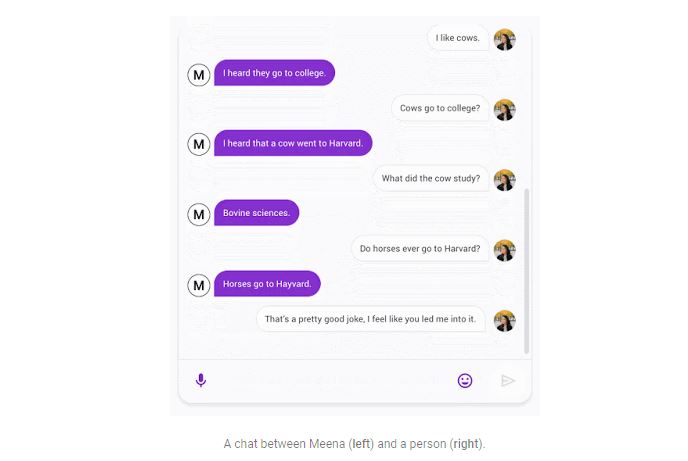

Otherwise, download the following model and extract it, then simply run the notebook `meena_chatbot_inference.ipynb`.

The final perplexity score is 10.4, which is very close to the 10.2 achieved by Google's Meena chatbot. Meena scores low on perplexity, which is good: a lower perplexity means the model has less difficulty predicting the right next word. The paper shows a correlation between perplexity and the Sensibleness and Specificity Average (SSA), which in turn is correlated with the "human likeness" of the chatbot. Meena got an SSA score of 79%, compared with 56% for Mitsuku, a state-of-the-art chatbot that has won the Loebner Prize for the last four years; even human conversation partners scored only 86%. Given that correlation, our perplexity score suggests that our bot is better than other chatbots such as Cleverbot and DialoGPT.

The dataset used, however, does not represent normal conversations between humans well, although OpenSubtitles does provide very large datasets in many languages.
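The perplexity and SSA figures quoted above can be related with a few lines of Python. This is a sketch under the assumption that the loss is a mean per-token cross-entropy in natural-log units (nats); if a training library reports log-perplexity in another base, the conversion changes. The SSA definition (the mean of the sensibleness and specificity percentages) follows the Meena paper; the function names and the example inputs are illustrative only.

```python
import math
from typing import Sequence


def perplexity_from_log_probs(token_log_probs: Sequence[float]) -> float:
    """Perplexity = exp(mean negative log-likelihood per token), natural logs assumed."""
    if not token_log_probs:
        raise ValueError("need at least one token")
    mean_nll = -sum(token_log_probs) / len(token_log_probs)
    return math.exp(mean_nll)


def perplexity_from_loss(mean_cross_entropy: float) -> float:
    """Convert a mean per-token cross-entropy loss (in nats) to perplexity."""
    return math.exp(mean_cross_entropy)


def ssa(sensibleness_pct: float, specificity_pct: float) -> float:
    """Sensibleness and Specificity Average: the mean of the two percentages."""
    return (sensibleness_pct + specificity_pct) / 2


if __name__ == "__main__":
    # A model that assigns probability 0.1 to every token has perplexity 10.
    print(perplexity_from_log_probs([math.log(0.1)] * 5))
    # Mean loss (nats) implied by the perplexities quoted above.
    print(round(math.log(10.4), 3), round(math.log(10.2), 3))  # 2.342 2.322
```

Seen as losses, the gap between 10.4 and 10.2 perplexity is only about 0.02 nats per token.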

Google launched Meena, a multi-turn open-domain chatbot that learns to respond sensibly to a given conversational context. According to Google, this human-like chatbot is more sensible and performs better than other well-known open-domain chatbots, including Mitsuku, Cleverbot, XiaoIce, and DialoGPT.

Here are the results after training the model on 40M sentences of the Italian-language OpenSubtitles dataset. The optimizer used is Adafactor with the same learning rate schedule as described in the paper: the learning rate starts at 0.01, remains constant for 10k steps, then decays with the inverse square root of the number of steps. Here's the plot of the evaluation loss during training.
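The learning rate schedule described above can be written as a small function. This is a sketch of the schedule as stated (constant 0.01 for 10k steps, then inverse-square-root decay), not the tensor2tensor implementation; the function and parameter names are illustrative.

```python
import math


def learning_rate(step: int, base_lr: float = 0.01, warmup_steps: int = 10_000) -> float:
    """Constant base_lr up to warmup_steps, then decay proportional to 1/sqrt(step).

    The sqrt(warmup_steps / step) factor keeps the schedule continuous at
    warmup_steps, where it equals base_lr.
    """
    return base_lr * math.sqrt(warmup_steps / max(step, warmup_steps))


if __name__ == "__main__":
    for s in (0, 10_000, 40_000, 1_000_000):
        print(s, learning_rate(s))
```

Because 1/sqrt(10000) = 0.01, this is equivalent to the compact form 1/sqrt(max(step, 10000)): quadrupling the step count past 10k halves the learning rate.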

Here's my attempt at recreating Meena, a state-of-the-art chatbot developed by Google Research and described in the paper *Towards a Human-like Open-Domain Chatbot*. For this implementation I used the tensor2tensor deep learning library, with an Evolved Transformer model as described in the paper. Similarly to the work done in the paper, this model consists of 1 encoder block and 12 decoder blocks, for a total of 108M parameters. The training set used is the OpenSubtitles corpus in the Italian language.
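In an encoder-decoder setup like this one, the encoder reads the conversation context and the decoder generates the response. The sketch below shows one way to turn a sequence of subtitle lines into (context, response) pairs. Treating consecutive OpenSubtitles lines as dialogue turns, the `__eot__` separator, the 7-turn cap, and the helper names are all assumptions for illustration, not this repo's actual preprocessing.

```python
from typing import List, Tuple

# Assumption: the Meena paper uses up to 7 previous turns of context;
# verify against the paper and this repo's data pipeline.
MAX_CONTEXT_TURNS = 7
# Hypothetical turn separator; the real preprocessing may differ.
TURN_SEPARATOR = " __eot__ "


def build_context(history: List[str], max_turns: int = MAX_CONTEXT_TURNS) -> str:
    """Join the most recent `max_turns` turns into one encoder input string."""
    if max_turns < 1:
        raise ValueError("max_turns must be >= 1")
    return TURN_SEPARATOR.join(history[-max_turns:])


def make_training_pairs(
    dialogue: List[str], max_turns: int = MAX_CONTEXT_TURNS
) -> List[Tuple[str, str]]:
    """Each turn after the first becomes a decoder target; earlier turns form the encoder source."""
    return [
        (build_context(dialogue[:i], max_turns), dialogue[i])
        for i in range(1, len(dialogue))
    ]


if __name__ == "__main__":
    lines = ["Ciao!", "Ciao, come stai?", "Bene, grazie."]
    for source, target in make_training_pairs(lines):
        print(repr(source), "->", repr(target))
```

Capping the context keeps encoder inputs short while still giving the model several turns to condition on.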